LaMDA: What Is It and How Is It Different?

Google Bard & LaMDA: The Language Model for Dialogue Applications, or LaMDA, is a relatively new development in the field of natural language processing that makes it possible for a machine to simulate human-like conversations. LaMDA was developed and introduced by Google. It is a separate system from OpenAI's ChatGPT, which is built on OpenAI's GPT family of models, although both are large language models designed for conversation. LaMDA was trained on large amounts of text data to generate context-aware and semantically meaningful responses to conversational queries.

Part of LaMDA's training consists of predicting the next word in a given context, which makes it quite similar to GPT-3. In addition to predicting individual words, however, LaMDA is able to anticipate entities, actions, and events within a conversation. This makes LaMDA versatile and suitable for a variety of applications, such as customer service, virtual assistants, and chatbots.
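To make next-word prediction concrete, here is a minimal sketch in Python. It is a toy bigram counter, not how LaMDA or GPT-3 are actually built (those are large neural networks), and the function names and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(corpus):
    """Count, for each word, which words follow it in the training sentences."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical training sentences, invented for this example.
corpus = [
    "how can i help you today",
    "how can i reset my password",
    "can i help you with your order",
]
model = train_bigram(corpus)
print(predict_next(model, "can"))   # prints "i"
print(predict_next(model, "help"))  # prints "you"
```

A real language model replaces the lookup table with a neural network that scores every word in its vocabulary, but the training signal is the same idea: given the context so far, guess what comes next.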

Compared to more conventional conversational models, LaMDA has several advantages. It is able to process open-domain queries and produce answers that make sense. In addition, LaMDA can generate several candidate responses and rank them according to quality.
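The idea of producing several candidates and ranking them can be sketched as follows. This is only an illustration: the `score` function below is a hypothetical word-overlap heuristic chosen to keep the example short, and it is not the quality measure Google uses.

```python
def score(query, candidate):
    """Toy quality score: fraction of the query's words that the candidate reuses."""
    query_words = set(query.lower().split())
    candidate_words = set(candidate.lower().split())
    if not query_words:
        return 0.0
    return len(query_words & candidate_words) / len(query_words)

def rank_candidates(query, candidates):
    """Sort candidate responses from highest to lowest score."""
    return sorted(candidates, key=lambda c: score(query, c), reverse=True)

# Hypothetical query and candidate responses, invented for this example.
query = "how do i reset my password"
candidates = [
    "I am not sure.",
    "You can reset your password from the account settings page.",
    "To reset my password I click the reset link, and you can do the same.",
]
ranked = rank_candidates(query, candidates)
for response in ranked:
    print(round(score(query, response), 2), response)
```

In a production system the scoring step would be a learned model rather than word overlap, but the shape is the same: generate several options, score each one, and return the best.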

What Are Google LaMDA & Bard?

Google LaMDA is an artificial intelligence model from Google that is designed to have natural-sounding conversations. Like OpenAI’s GPT models, Google’s LaMDA is trained on massive volumes of text data.

ChatGPT is widely seen as a competitor to Google’s crown jewel, the search engine. The growing popularity of ChatGPT, together with Microsoft’s recent investment in OpenAI, has prompted Google to respond. To counter the rise of ChatGPT, Google recently announced plans to launch its own chatbot. This new chatbot, dubbed Bard, is designed to provide users with a comprehensive conversational experience.

Sundar Pichai, Google’s CEO, announced Bard on Google’s own blog on February 6, 2023. The blog post went over the features and benefits of Bard, a new AI-powered assistant that can learn from user interactions and generate more natural responses.

Bard vs ChatGPT: How Might ChatGPT Be Affected?

The development and release of Google LaMDA & Bard may have an impact on the use and popularity of OpenAI’s ChatGPT in a variety of ways.

- The market for conversational AI models may become more competitive with the introduction of Bard.

- A further potential effect is on the creation and evolution of conversational AI models. With Google’s involvement, the technology may improve much further, which would be advantageous to both ChatGPT users and the creators of such models.

- LaMDA may also outperform competing models in accuracy and user experience, thanks to Google’s extensive resources and artificial intelligence expertise. This might cause the market to move away from ChatGPT-style models and towards Google LaMDA.

Google’s Bard: How to Access It?

Neither Bard nor Google LaMDA (Language Model for Dialogue Applications) is currently available to the general public. Access is currently limited to use as a research tool for scholarly and scientific purposes. In the future, Google might make LaMDA available to the general public through cloud-based services or as a component of its API offerings. In that case, Google LaMDA would likely be offered via a developer platform or API, with pricing based on usage volume and other variables.

If you’re interested in adopting conversational AI for your own projects or applications, there are numerous other models and tools available, including OpenAI’s ChatGPT, which can be accessed using the OpenAI API.

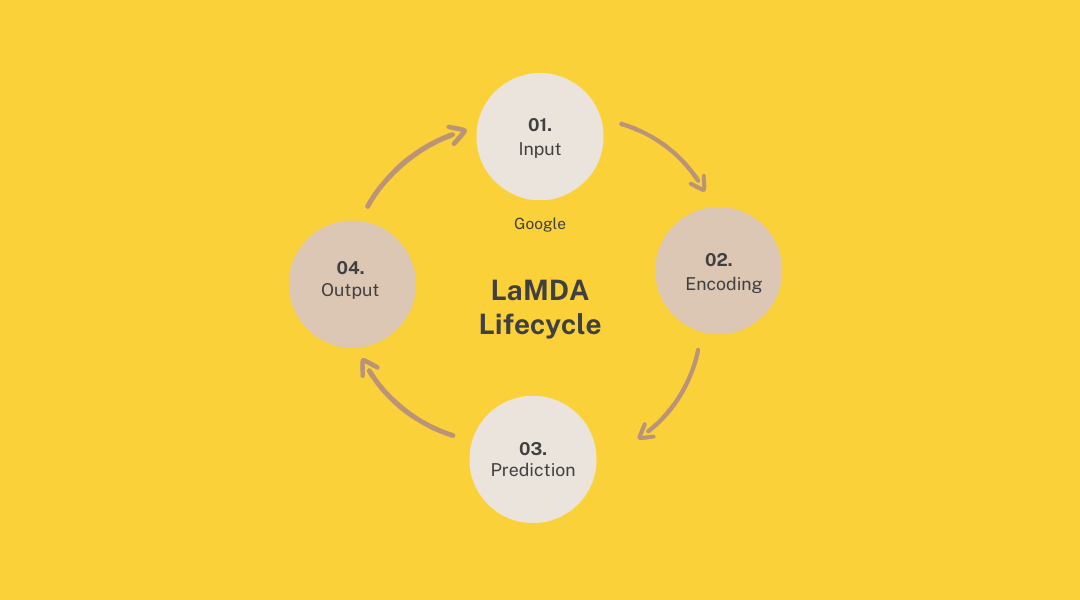

How Does LaMDA Work?

Google’s LaMDA works in a broadly similar way to OpenAI’s GPT models. To understand how, let’s walk through LaMDA’s internal mechanism step by step:

- Input: An input is given to LaMDA in the form of a conversational cue, which may be a question, a statement, or some other kind of query.

- Encoding: The input is encoded into a numerical representation called an embedding that accurately represents its meaning and context.

- Prediction: Using its training, LaMDA can make predictions about the upcoming conversational term, entity, action, or event based on the input embedding.

- Model output: A response is produced based on the model’s predictions. The model’s output is the response decoded into human-readable language.
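The four steps above can be sketched in a few lines of Python. This is a deliberately simplified stand-in, assuming a bag-of-words vector in place of a learned embedding and a cosine-similarity lookup in place of a neural network. `VOCAB`, `RESPONSES`, and the function names are all hypothetical and exist only for this example.

```python
import math

# Hypothetical vocabulary and canned responses, invented for this example.
VOCAB = ["hello", "weather", "today", "reset", "password"]
RESPONSES = [
    ("hello", "Hello! How can I help you?"),
    ("weather today", "I cannot check live weather, but I can chat about it."),
    ("reset password", "You can reset your password in the account settings."),
]

def encode(text):
    """Encoding: map text to a numeric vector (word counts over VOCAB)."""
    words = text.lower().split()
    return [float(words.count(term)) for term in VOCAB]

def cosine(a, b):
    """Cosine similarity between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def predict(embedding):
    """Prediction: index of the known prompt that best matches the embedding."""
    scores = [cosine(embedding, encode(prompt)) for prompt, _ in RESPONSES]
    return max(range(len(scores)), key=scores.__getitem__)

def respond(text):
    """Run the full pipeline: input -> encoding -> prediction -> model output."""
    embedding = encode(text)      # Encoding
    best = predict(embedding)     # Prediction
    return RESPONSES[best][1]     # Model output as readable text

print(respond("how do i reset my password"))
```

A real dialogue model generates its reply word by word instead of picking from a fixed list, but the flow of data through the stages is the same.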
